The Chat & Ask AI Leak Shows Why I Built Governance First

When 404 Media's story about Chat & Ask AI leaking 380 million private messages dropped into my feed on 29 January, I didn't feel surprised. I felt vindicated.

I didn't predict this specific leak. I predicted this pattern would continue. I'd spent the last few months building Aqta expecting exactly this failure mode. Not because I'm psychic, but because I've seen this pattern destroy trust at Meta, watched it play out at TikTok, and knew it would hit consumer AI next.

Most AI development today is "vibe coding": the same "move fast and break things" mentality that created the Facebook/Cambridge Analytica scandal [5], now applied to systems handling suicide notes and medical questions. Ship the shiny interface first, worry about security and governance later. The Chat & Ask AI leak is what happens when "later" never comes.

When I started building Aqta, I knew I didn't want that approach. I wanted an AI governance platform that shows its work, not just what it thinks. That forced an uncomfortable constraint: build something I would actually trust with my own messy thoughts and half-formed questions.

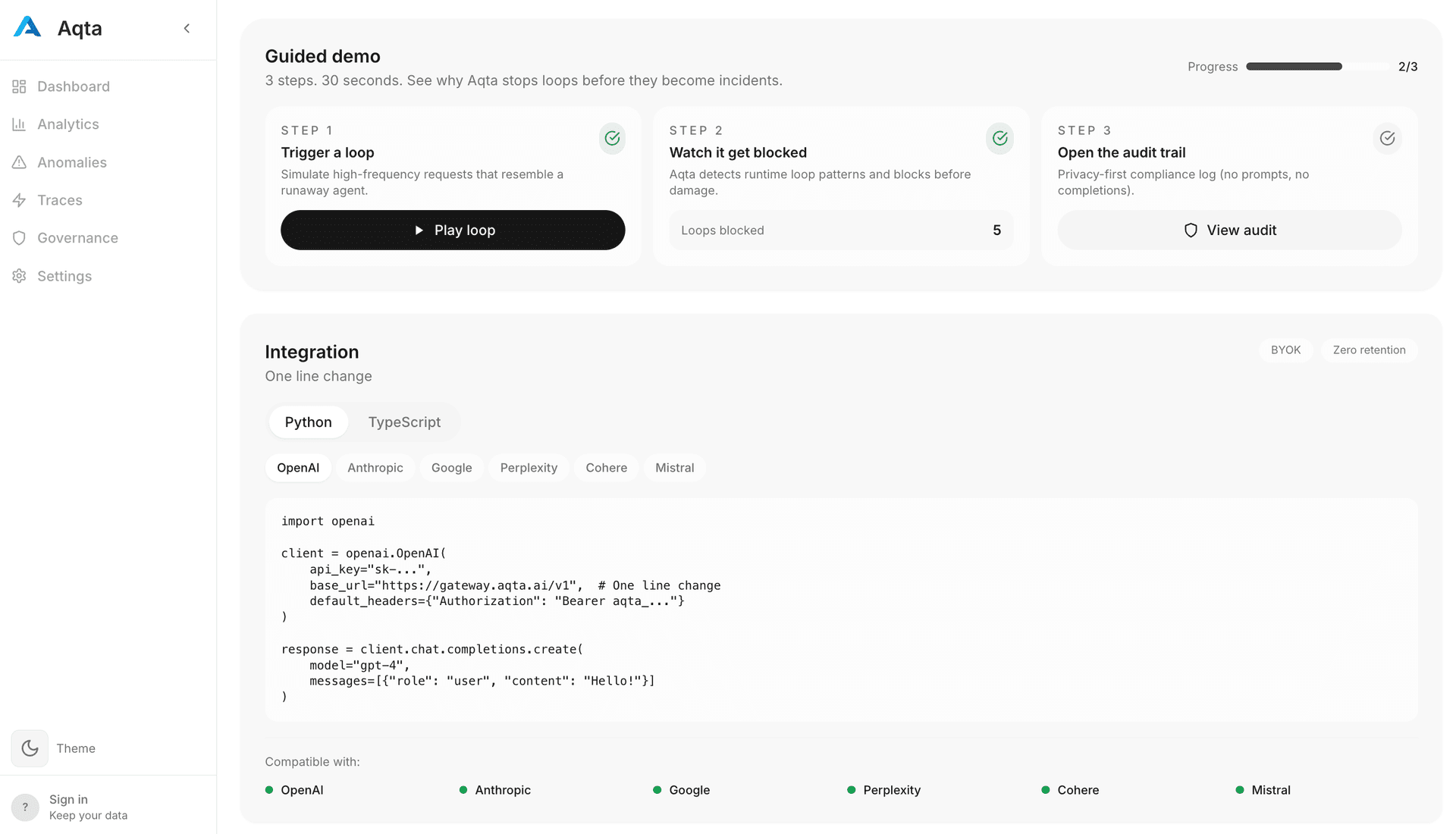

Which meant building Aqta, the governance layer, first. Not because governance prevents infrastructure misconfigurations — that's DevOps. But because the same culture that skips security basics also skips audit trails, policy versioning, and accountability frameworks.
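To make "audit trails" and "policy versioning" concrete, here is a minimal sketch of a tamper-evident audit log. It is an illustration only, not Aqta's actual implementation: the `AuditLog` class, its field names, and the hash-chaining approach are all assumptions chosen for the example. Each entry records which policy version was in force and commits to the previous entry's hash, so a later edit to any record becomes detectable.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over an entry's fields (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; every entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def append(self, actor: str, action: str, policy_version: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "actor": actor,
            "action": action,
            "policy_version": policy_version,  # which rules applied at the time
            "prev_hash": prev_hash,
        }
        body["hash"] = _digest(body)  # digest is computed before "hash" is set
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute the chain; False if any entry was altered or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

A typical use would be to call `append("alice", "policy.change", "v2")` on every governed action and run `verify()` during an audit. A production system would also need durable storage and signed entries, which this sketch leaves out.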

What Aqta Actually Is

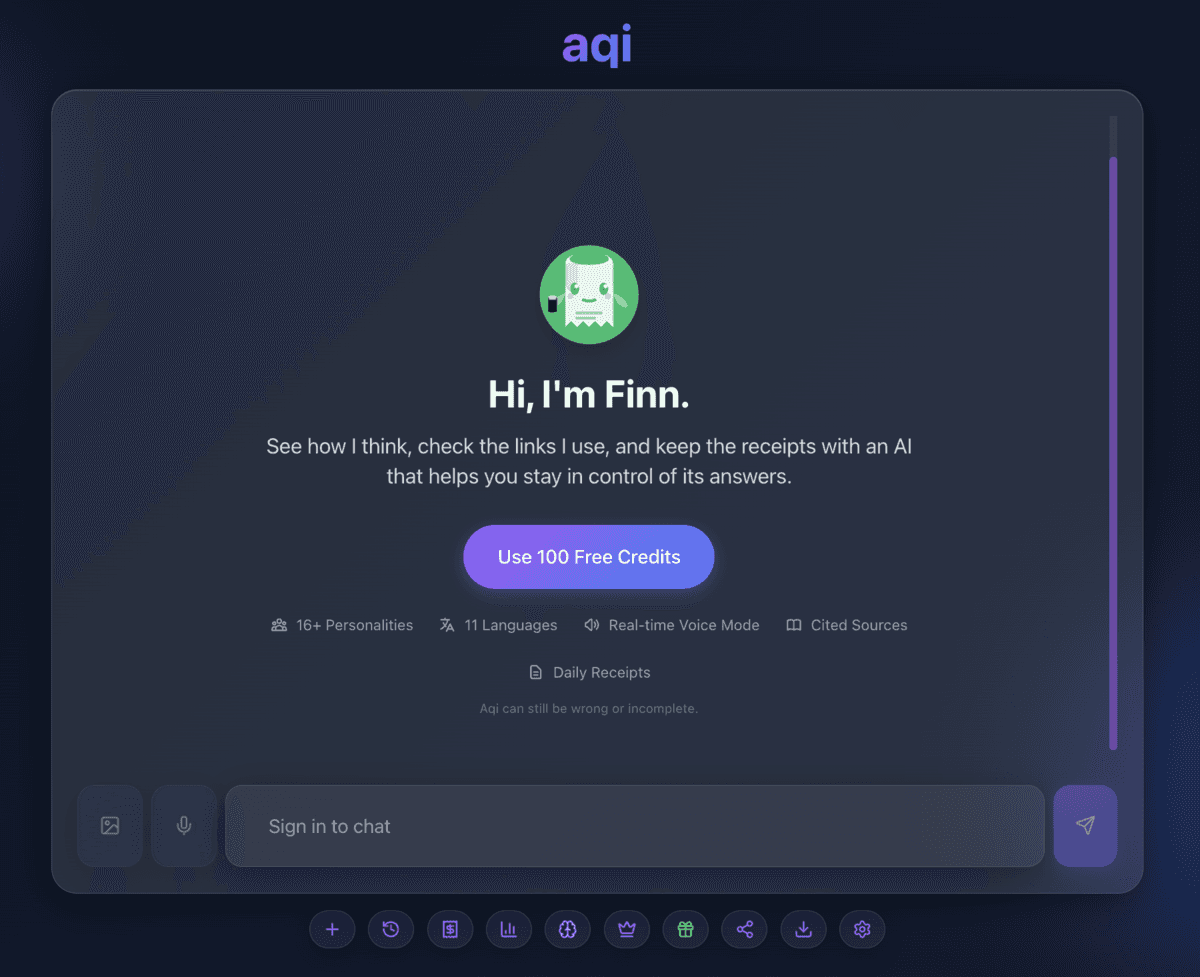

Aqta is the governance infrastructure I built first. It's a privacy-first AI governance platform for enterprise teams deploying AI in healthcare, finance, and other regulated industries. But to prove the architecture worked, I also built Aqi, a consumer AI chat app that runs on Aqta's governance layer.

Think of it this way: Aqta is the governance platform (B2B). Aqi is the AI companion that proves it works (B2C). Both share the same metadata-only architecture that prevents honeypot breaches.
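As a rough illustration of what "metadata-only" can mean in practice, the sketch below keeps a record about a message without keeping the message itself. This is an assumption-laden example, not a description of Aqta's real schema: `MessageMetadata`, `to_metadata`, and the chosen fields are hypothetical. The point is that if the stored table leaks, it contains no conversation text and no raw user IDs.

```python
import hashlib
import hmac
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class MessageMetadata:
    """Hypothetical stored record: describes a message, never contains it."""
    user_ref: str      # keyed pseudonym derived from the user ID
    char_count: int    # size of the message, not its text
    created_at: float  # Unix timestamp
    model: str         # which model handled the request


def to_metadata(user_id: str, content: str, model: str, key: bytes) -> MessageMetadata:
    """Reduce a message to metadata; `content` is read once and then dropped."""
    # HMAC with a server-side key, so the pseudonym cannot be recomputed
    # from a leaked table without also stealing the key.
    user_ref = hmac.new(key, user_id.encode(), hashlib.sha256).hexdigest()[:16]
    return MessageMetadata(
        user_ref=user_ref,
        char_count=len(content),
        created_at=time.time(),
        model=model,
    )
```

Calling `to_metadata("user-42", "a private question", "demo-model", key)` yields a record that supports usage monitoring and auditing while giving an attacker nothing to read.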

Aqi (consumer AI chat) shows its work with three transparency levels you control

The Incident: What Happened

On 19 January 2026, security researchers discovered that Chat & Ask AI had exposed 380M messages to the public internet. The root cause was straightforward: a misconfigured backend storing full message content created a honeypot that became an attractive target.

This wasn't a sophisticated attack. It was an architectural mistake—the kind that happens when you build fast without governance. Users shared deeply personal struggles, medical concerns, relationship problems, all sitting unprotected.

"The database was accessible to anyone on the internet without authentication, and contained millions of user conversations with AI chatbots."

Source: 404 Media investigation [1]

This is what happens when you prioritise speed over safety. When you're racing to ship features and acquire users, both security and governance feel like something you can address later.

And Chat & Ask AI isn't alone. Security researchers at Appdome analysed over 156,000 iOS apps and found that 71% leaked at least one hardcoded secret: passwords, API keys or access tokens sitting directly in app code. Among the worst offenders were 198 AI apps, including chat apps that exposed user conversations, phone numbers, and login credentials for millions of users [3].
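For readers who have not seen one, a "hardcoded secret" is simply a credential written as a string literal in source code. The sketch below is a deliberately small scanner that flags two common shapes. It is not the method Appdome used, and the pattern list and the `find_hardcoded_secrets` helper are assumptions for illustration. Real scanners use far larger rule sets plus entropy checks.

```python
import re

# Illustrative patterns only; a real rule set would be much broader.
SECRET_PATTERNS = {
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A secret-looking name assigned a quoted literal of 8+ characters.
    "assigned_secret": re.compile(
        r"(?i)\b(api[_-]?key|secret|token|password)\b\s*[:=]\s*[\"']([^\"']{8,})[\"']"
    ),
}


def find_hardcoded_secrets(source: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspicious line."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, name))
    return findings
```

Running this over a file that contains `let apiKey = "not-a-real-key-123"` reports that line as `assigned_secret`. The usual fix is to load the value at runtime from a secrets manager or server-side configuration instead of shipping it in the app binary.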

On the same day, researchers discovered that Bondu, an AI toy company, left over 50,000 children's chat logs exposed behind a basic Gmail login. Anyone could access transcripts showing kids' names, birth dates, and family details [4].

The pattern is clear: infrastructure misconfigurations, hardcoded secrets, no audit trails. Sometimes it's security. Sometimes it's governance. Often it's both.

I didn't build Aqta so you'd stop using AI. I built it so you could keep using AI without gambling with your private thoughts.

Why Retrofit Fails

I've seen teams try to retrofit governance onto existing AI products. It never works cleanly. Meta paid €1.2 billion in GDPR fines and had to rebuild cross-border data infrastructure. Uber's post-2016 compliance overhaul took three years. Healthcare AI companies seeking FDA clearance often discover their "move fast" architectures can't provide the audit trails regulators demand.

"Governance can't be retrofitted. It has to be the foundation."

That's why I built Aqta with governance first. It's the governance infrastructure that makes privacy-first AI possible. Every feature in Aqta was designed with privacy, auditability, and safety as core constraints, not afterthoughts. This follows GDPR's Article 25 principle of "data protection by design" [6] and the NIST AI Risk Management Framework [7].

Aqta's governance dashboard provides real-time monitoring and audit trails

The Aqta Difference

When you use Aqta, you're not just getting an AI governance tool. You're getting a platform that:

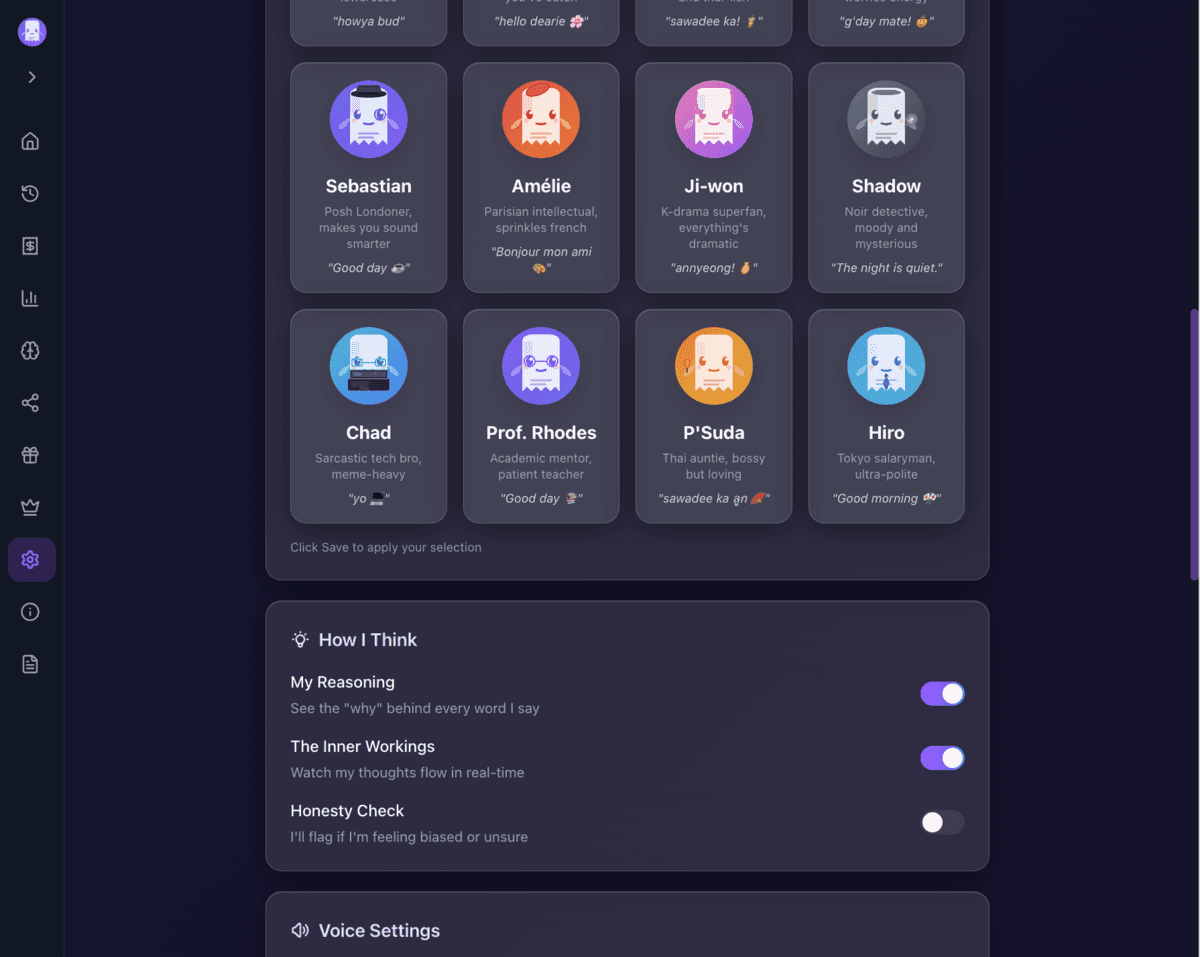

- Shows its work with three transparency levels: reasoning, real-time thoughts, or bias detection

- Matches your style with 16 personalities from chatty Dublin Gen-Z to thoughtful Granny

- Speaks your language with support for 11 languages globally

- Keeps you in control with no surprise updates or humans reading your chats

Most AI tools give you fluent answers and hope you don't ask questions. Aqta shows the work with three transparency levels — you control how much you see. You can see how it reasoned, where it pulled information from, and what it "learnt" along the way, or turn it all off if you just want clean answers.

Aqi's transparency settings (consumer app) let you control how much you see behind the AI's reasoning

What This Means for AI

The Chat & Ask AI leak won't be the last. As AI becomes more personal and more powerful, the stakes get higher.

That's why I built both Aqi and Aqta. Aqi (consumer AI chat) proves that transparent, accountable AI can work. Aqta (enterprise governance) scales the same principles for teams deploying AI in healthcare, finance, and other regulated industries.

Aqi + Aqta: Aqi is the consumer AI chat app (transparent reasoning, privacy-first). Aqta is the enterprise governance platform that powers it, designed for healthcare, finance, and other regulated industries. Both use the same metadata-only architecture.

Even with perfect governance, leaks can still happen — insider threats, zero-days, supply chain attacks. But we can stop treating security and governance as afterthoughts. We can make the preventable failures actually preventable.

Trust must be designed in from day one, not bolted on after the breach.

Experience Governance-First AI

Whether you're looking for a transparent AI companion or enterprise governance infrastructure, both are built with the same principle: trust can't be retrofitted.

For Individuals

Try Aqi free — no credit card required

References

- "Massive AI Chat App Leaked Millions of Users' Private Conversations", 404 Media, January 2026

- "Thousands of iPhone apps expose data inside Apple App Store", Fox News, January 2026

- "Hardcoded Secrets in iOS Apps Open the Door to Modern Cyber Attacks", Appdome, January 2026

- "Security Flaw at AI Toy Company Exposed Over 50,000 Chat Logs of Kids", PCMag UK, January 2026

- Reddit discussion: "Massive AI chat app leaked millions of users' private conversations", r/technology

- "Revealed: 50 million Facebook profiles harvested for Cambridge Analytica", The Guardian, March 2018

- "GDPR Article 25: Data protection by design and by default", GDPR.eu

- "AI Risk Management Framework (AI RMF 1.0)", NIST, January 2023

- "Who's Accountable When Healthcare AI Makes a Mistake?", Aqta Blog, January 2026

Anya Chueayen

Technical founder with full-stack AI infrastructure experience. Previously worked on integrity and governance at social media platforms, solving the messy edge cases between human behaviour and AI ethics at scale. Based in Dublin, Ireland — global perspective on AI regulation.
