The human supply chain behind AI, and why runtime enforcement is the missing layer

Three AI coding assistants, same task: build a logging function. All three imported deprecated libraries. One pulled in a dependency with a known remote code execution (RCE) flaw. None flagged it.

November 2025: Coinbase Ireland fined €21.5M for AML monitoring failures. AI-generated code is moving faster than the humans responsible for it.

Models are getting cheaper. Code generation is getting faster. The software and human infrastructure underneath is getting riskier.

The model is the easy part. What runs beneath it is not.

Models as commodities, code as floodwater

Foundation models are trending toward commodity economics. As costs collapse and performance converges, the differentiator shifts from "which model?" to "what do you build on top?"

If it is cheap to ask models for code, you will get a lot of code. Copilots, internal agents and code‑generation tools make it trivial to produce new services, scripts and glue logic across the organisation. Every generated function can pull in new dependencies, touch new APIs and extend your blast radius.

The economics point to a rapid expansion of AI‑generated code and agentic behaviour; the question is how to keep it safe once it is running.

A software supply chain that outpaces manual review

At the same time, the software supply chain is already beyond human scale. A 2024 CVE review shows 40,009 published vulnerabilities in a single year, up nearly 39% from 2023 and averaging roughly 108 new CVEs every day. Many are in open‑source dependencies and transitive packages, precisely the libraries AI tools are happy to import without context.

Even if your AI assistant writes "perfect" code, the libraries it pulls in may hide serious vulnerabilities. Security and platform teams are already struggling to:

- Track which microservice is using which version of which library.

- Understand how AI‑generated changes alter runtime behaviour across complex systems.

Static scanning alone is not enough. What matters is how the AI‑generated code and agents behave in production: what they call, what data they touch, what loops they get stuck in.

The people behind the model

The software supply chain is one problem. The human supply chain is another. Every major model was shaped by workers who labelled, annotated and moderated training data, often for poverty wages with no safety net.

The EU Corporate Sustainability Due Diligence Directive (CSDDD) requires large companies to address human‑rights impacts across their value chains, including AI training subcontractors.

A 2023 investigation found data labellers paid $1.32–$2 per hour to review graphic content for a major AI system. The companies deploying those models carry that risk whether they know it or not.

Most companies cannot trace from "we use this model" to "here is who built it and under what conditions." That gap is an ethical and reputational exposure, not just a procurement footnote.

We want to be honest about what this means for runtime governance. A receipt at the call boundary can name the model and the version. It cannot tell you what the annotators were paid. That is a procurement, contracting and policy problem, not one that signing a JSON envelope solves. The receipt makes the model choice auditable. The decision to use a model trained under poor conditions still sits with the buyer.

What runtime receipts can and can't do

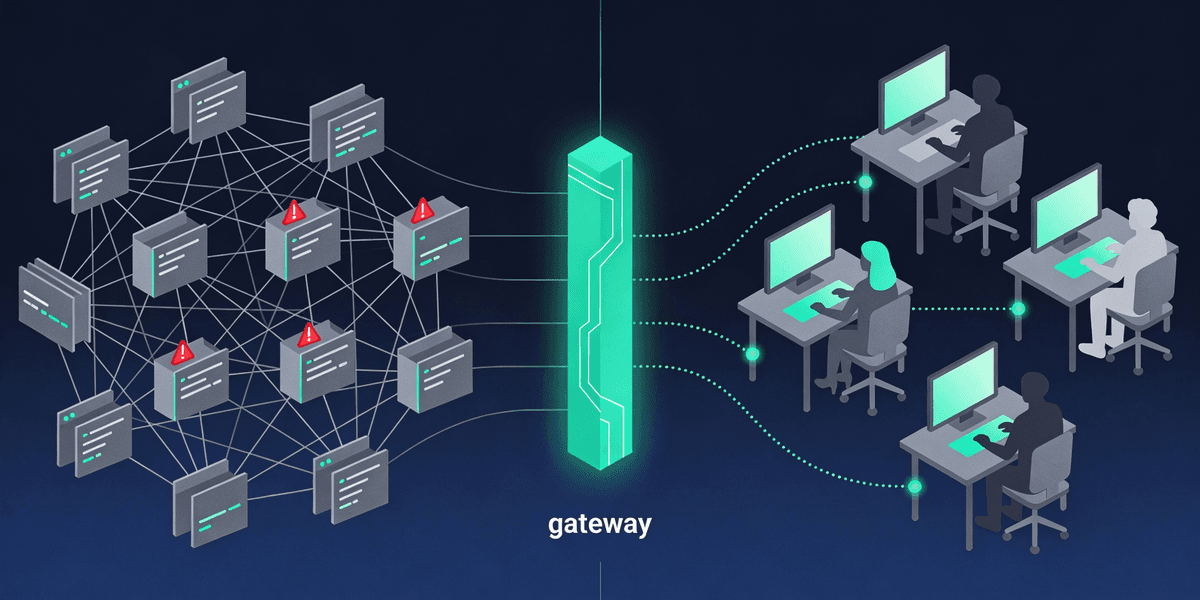

AqtaCore sits in front of your AI agents and model calls. We want to be precise about the layer it operates on, because the rest of the stack still matters.

What we built

Every call through AqtaCore produces an Ed25519-signed receipt: which model, which tools, which APIs touched, at what cost, with what outcome. The receipt is verifiable offline with a published public key. Policy runs on every call (budget caps, loop detection, model-allowlist enforcement), not in a quarterly audit. Each model endpoint can be tagged with its hosting region and data-retention policy so the policy engine has those to enforce against.
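As an illustration only, the per-call checks named above (model allowlist, budget cap, loop detection) could be sketched in Python roughly as follows. This is not AqtaCore's implementation: the class names, field names, thresholds and reason strings are all hypothetical. Signing is left out because Ed25519 is not in the Python standard library.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class CallRequest:
    agent_id: str
    model: str
    tool: str
    arguments: str             # serialised tool arguments
    estimated_cost_eur: float


@dataclass
class Policy:
    allowed_models: frozenset
    budget_cap_eur: float
    loop_window: int = 5       # recent calls remembered per agent
    loop_threshold: int = 3    # identical calls in the window that count as a loop


@dataclass
class PolicyEngine:
    policy: Policy
    spent_eur: float = 0.0
    recent: dict = field(default_factory=dict)

    def check(self, req):
        """Return (allowed, reason). State is updated only when the call is allowed."""
        if req.model not in self.policy.allowed_models:
            return False, "model_not_allowlisted"
        if self.spent_eur + req.estimated_cost_eur > self.policy.budget_cap_eur:
            return False, "budget_cap_exceeded"
        history = self.recent.setdefault(
            req.agent_id, deque(maxlen=self.policy.loop_window)
        )
        fingerprint = (req.model, req.tool, req.arguments)
        # Count this call together with identical recent ones from the same agent.
        if history.count(fingerprint) + 1 >= self.policy.loop_threshold:
            return False, "loop_detected"
        history.append(fingerprint)
        self.spent_eur += req.estimated_cost_eur
        return True, "ok"


engine = PolicyEngine(Policy(frozenset({"model-a"}), budget_cap_eur=1.0))
request = CallRequest("agent-1", "model-a", "search", "q=logs", 0.25)
for _ in range(3):
    print(engine.check(request))  # the third identical call is refused as a loop
```

The point of the sketch is the placement of the checks: they run before each call rather than in a periodic review, so a refused call costs nothing and leaves a reason that can be recorded in the receipt.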

What it would show

In the coding-assistant experiment above, each assistant’s call would produce a receipt naming the model that generated the suggestion. After a deprecated library is imported, the receipt history names which model proposed which import, at what cost, against which policy. That is not a defence against the import happening. It is an audit trail that survives the engineer leaving and the model version being retired.
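To make the audit-trail idea concrete, here is a minimal Python sketch of querying a receipt history for a given import. The `Receipt` fields, the `who_proposed` helper and the sample data (model names, the `legacy-logger` package, policy identifiers) are assumptions for illustration, not AqtaCore's actual schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Receipt:
    receipt_id: str
    timestamp: str            # ISO 8601, UTC, so string order matches time order
    model: str
    model_version: str
    proposed_imports: tuple   # hypothetical field: packages named in the suggestion
    cost_eur: float
    policy_id: str


def who_proposed(history, package):
    """Return the receipts whose call proposed importing `package`, oldest first."""
    matches = [r for r in history if package in r.proposed_imports]
    return sorted(matches, key=lambda r: r.timestamp)


history = [
    Receipt("r-002", "2025-03-02T10:15:00Z", "model-b", "2025-02",
            ("legacy-logger",), 0.004, "policy-7"),
    Receipt("r-001", "2025-03-01T09:00:00Z", "model-a", "2025-01",
            ("legacy-logger", "json"), 0.003, "policy-7"),
    Receipt("r-003", "2025-03-03T11:30:00Z", "model-c", "2025-02",
            ("logging",), 0.002, "policy-7"),
]

for r in who_proposed(history, "legacy-logger"):
    print(r.timestamp, r.model, r.model_version, r.cost_eur, r.policy_id)
```

Because each record is self-describing, the query still answers "which model proposed this import, and under which policy" after the engineer has moved on or the model version has been retired.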

Where it stops

A receipt at the call boundary does not run static analysis on the generated code. It does not detect a malicious package on import. It does not see what your dependency does at runtime once it is in the binary. SBOMs, static analysis, sandbox execution and developer training still matter, and the labour conditions discussed earlier are still a procurement-and-policy problem rather than a signing-key one. Runtime call traceability is one layer in a stack, not the only thing that scales.

The model is a commodity. The infrastructure that makes it trustworthy at scale is not. That is what we are building.

References

- Bank and equity research commentary. "Foundation Models Trending Towards Commodity Economics". 2024-2025.

- Central Bank of Ireland. "Coinbase Ireland €21.5M AML Penalty". November 2025.

- Jerry Gamblin. "2024 CVE Data Review". 5 January 2025.

- SOCRadar, Bitsight and others. "2024 Vulnerability Record: 40,000+ CVEs Published". 2024.

- TIME Magazine. "OpenAI Used Kenyan Workers on Less Than $2 Per Hour". January 2023.

- Business & Human Rights Resource Centre. "Labour Conditions for Data-Labelling Workers Supporting Major AI Systems". 2023-2024.

- European Union. "Corporate Sustainability Due Diligence Directive (Directive (EU) 2024/1760)". Official Journal of the European Union, 2024.

Anya Chueayen

Founder of Aqta. Before this, I worked on integrity at social media platforms, the unglamorous side of AI where human behaviour, edge cases, and ethics collide at scale. That work convinced me that responsible AI needs infrastructure, not just good intentions. Based in Dublin, closely watching how regulation is reshaping what we build and how.
